A compelling, user-friendly website is the backbone of an organization’s presence online. As a communications professional, I’ll be the first to testify to the power of social media and email, but your audience really needs a cohesive, one-stop-shop location to learn more about your organization and what you have to offer them.

So what should you do when the feedback you receive about your website is lackluster? Users might feel overwhelmed by the number of menu options or underwhelmed by the visual design. They might have a difficult time figuring out what your mission is or where to purchase a product. Or maybe you actually don’t have any insight into what your audience thinks of your website.

Whatever the feedback – or lack thereof – it’s important to accept it with an open mind. Feedback is a gift. And don’t fret; there are plenty of resources available to improve your website!

But ultimately, of course, the best way to understand the people who use your website is, simply, to listen to them.

Last year, LUMA’s marketing team set out to make some improvements to luma-institute.com. As a company built on the belief that innovating and iterating are imperative, we knew our own website needed a refresh.

We began with Statement Starters: How might we better serve the people who visit our website? How might we make information more accessible and understandable? We put everything under the microscope, from the visual design to the main menu navigation.

We used over a dozen human-centered design methods throughout the project. But today I want to focus on one powerful method in particular: Think-aloud Testing, a method that invites people to narrate their experience while performing a given task.

This method yields some important benefits:

- Reveals what people are thinking.

- Deepens your empathy for others.

- Uncovers opportunities for improvement.

- Lowers development costs through early discovery.

The key to running effective Think-aloud Tests is being well-prepared – set up your participants for success so they feel comfortable giving you honest feedback. You’ll want to do some testing in advance to make sure any technology you’ll need is working properly and any processes you’ll use will flow in an understandable way.

Let’s walk through each step in the quick guide for this method and talk about how it can benefit your website (or any project):

- Identify what you will be testing and a few key tasks.

- Invite 6-9 different people to be the respondents.

- Schedule a testing session with each person.

- Introduce yourself and the purpose. Obtain consent.

- Remind each respondent, “We are NOT testing you.”

- Instruct them to conduct each task one at a time.

- Ask them to think aloud.

- Keep quiet, listen carefully, and take good notes.

- Thank each participant.

Identify what you will be testing and a few key tasks.

Don’t set the scope too large or too small. Your participants will want to feel productive and helpful, but they also won’t want to be overwhelmed with too many tasks. For our project, we created a mockup of the changes we were prototyping for the website. Then, as a team, we picked a few specific tasks for the participants to perform: Show us how you would get to the Our Approach page. How would you navigate back to the home page from here? Tell us where you would go to find information about our training offerings.

Invite 6-9 different people to be the respondents.

It’s important to maintain a balance here: don’t invite only people who are already familiar with your organization, and don’t invite only people who have never heard of it. Among the eight people we invited to test our website mockup, five were internal teammates, one of whom was brand-new to the company. Each employee worked on a different team – ranging from client success to finance to C-suite – so they each brought a different perspective to their test.

The three external testers each had a different level of knowledge about LUMA – ranging from someone who had taken workshops with us to someone who knew barely anything about us or our offerings.

All eight participants varied in terms of their genders, races, ages, and industries. A diverse set of participants will yield a diverse set of feedback.

When you invite people to participate, make sure you set expectations properly: How long will it take to complete the test? Do they need to prepare anything in advance? What tools will you use to facilitate the test – Zoom, Microsoft Teams, etc. – and how do they access them? Try to answer these questions up front to eliminate any stress on their part.

Schedule a testing session with each person.

One at a time! This will ensure each participant’s voice is heard and their time is respected. Be sure to timebox the activity clearly and accurately, based on how long a dry run of the test took.

Once you have a mockup or prototype, schedule testing as early in the project as possible. The prototype doesn’t need to be a perfect or complete idea to be tested! And the sooner you identify opportunities for improvement, the easier they’ll be to address.

Introduce yourself and the purpose. Obtain consent.

Keep things light and friendly! Your participants may be a bit nervous because they heard the word “test.” Make sure they’re clear on what they’re going to do: list your goals for the test and remind them how much time you have reserved. And if you’re recording the conversation, be sure to get their consent.

Remind each respondent, “We are NOT testing you.”

Assure the participant there are no right or wrong answers. Let them know that if something breaks – and it probably will since it’s only a prototype! – it’s not their fault. We used a staging version of our website during testing, and each participant discovered at least one error. We viewed it as bonus feedback. It’s always better to find those unexpected flaws during testing than after the project is live.

Instruct them to conduct each task one at a time.

Provide clear, straightforward instructions. If they unknowingly complete two tasks at once, don’t worry! Employ a bit of improv and skip to the next task that makes the most sense. And if you’re finding that participants get easily distracted along the way, perhaps that’s a sign they feel overwhelmed by the number of menu options or by the visual design.

Ask them to think aloud.

We always ask participants to “put your brain on speakerphone.” In our tests, we wanted to hear everything going through their minds as they navigated a given task. For example, one participant identified two different routes to get to the same page. They explained that they chose one route over the other because of its visual design, which helped us understand more about their experience. We also learned that many participants scrolled to the bottom of the page to find the website footer, rather than navigating through the home page – they wanted a text-only, straightforward map of each page on the site.

Keep quiet, listen carefully, and take good notes.

Once you’ve given participants their instructions for a task, take a passive role: sit back and listen closely. If you need to prompt them, ask open-ended questions. We recommend recruiting multiple people to run the test: one facilitator to ask questions, one person to oversee the technology, and one notetaker. If you’re a team of one, try to do as much prep work as possible to avoid losing focus on the most important thing: your participant.

Thank each participant.

Express your gratitude for their time. Some organizations will offer testing participants a small gift, or you could offer to return the favor and test something for them. Regardless, make sure they know you appreciate their efforts to help you improve your website.

What’s next?

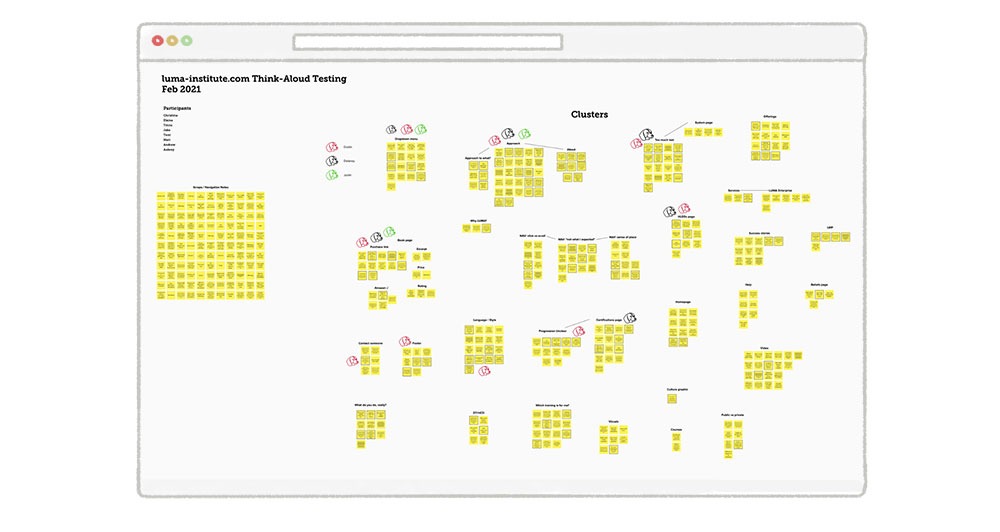

After you’ve completed your tests, you’ll (hopefully) have plenty of feedback to organize! We used sticky notes within MURAL, a digital whiteboard, to keep track of each piece of feedback. This environment set us up nicely to jump into another method: Affinity Clustering, which is a technique for sorting items according to similarity. We noticed plenty of themes within our user feedback, but we focused on the few that drew the most feedback.

You can see we used another method, Visualize the Vote, to prioritize the themes. (We used color-coded dog icons to represent my favorite animal, but you can use whatever you like!)

For example, in our case, only one participant called attention to a specific graphic we included, but all eight participants made comments about the main menu navigation being confusing. This made it clear that we definitely needed to focus some energy on the menu.
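If your notes live in a spreadsheet or export rather than on a whiteboard, the same comparison can be made with a quick tally. The sketch below is only an illustration, not the process we used: the participant IDs, theme labels, and the `participants_per_theme` helper are all hypothetical. It counts how many distinct participants raised each theme, so one person repeating a comment doesn’t inflate a theme’s weight.

```python
from collections import defaultdict


def participants_per_theme(notes):
    """Count how many distinct participants raised each theme.

    `notes` is an iterable of (participant_id, theme) pairs,
    one pair per sticky note. Returns (theme, count) pairs,
    most widely shared theme first.
    """
    seen = defaultdict(set)
    for participant, theme in notes:
        # A set ignores repeat comments from the same person.
        seen[theme].add(participant)
    counts = {theme: len(people) for theme, people in seen.items()}
    # Sort by count (descending), then alphabetically to break ties.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical notes: eight participants (P1..P8) comment on the menu,
# and one participant also comments on a specific graphic.
notes = [(f"P{i}", "main menu navigation") for i in range(1, 9)]
notes.append(("P3", "specific graphic"))
notes.append(("P1", "main menu navigation"))  # repeat comment, same person

ranked = participants_per_theme(notes)
for theme, count in ranked:
    print(f"{theme}: {count} participant(s)")
```

Counting people rather than sticky notes is a design choice: it keeps one especially talkative participant from pushing a theme to the top of the list.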

Remember, you may not be able to accommodate every single piece of feedback from the tests, but make time to address the most common points before you launch, and then return to your notes for future opportunities.

A powerful method for evaluating experiences

While it may feel overwhelming to think about adding another step to large projects, taking the time to conduct Think-aloud Tests gives you the opportunity to more deeply understand your users. With some effective timeboxing and strategic preparation, we were able to conduct all eight tests within two working days! Our team was so glad we decided to commit the time and effort to collect feedback from our participants – our website is so much better for it. We hope you’ll find this method useful as a way to evaluate prototypes and make products, experiences, and offerings better.